
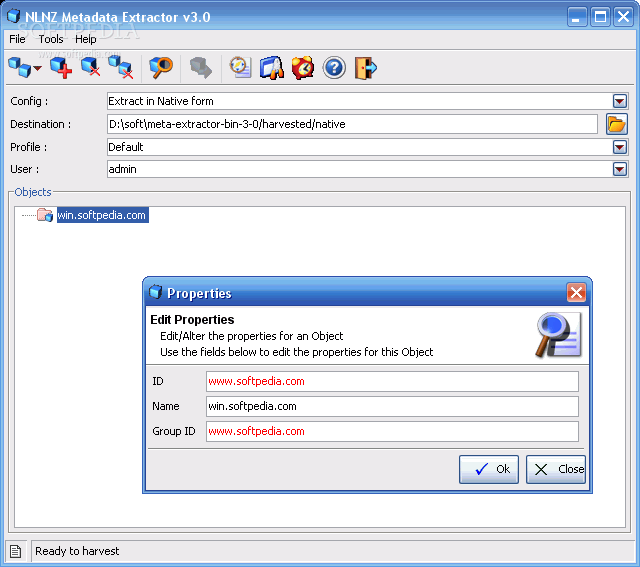

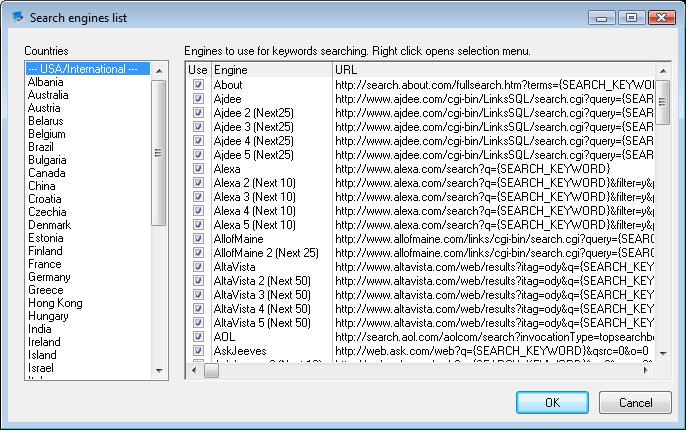

From the URL example above, you can see the duplication of TITLE tags, missing DESCRIPTIONS and more. Take a quick look at the results you get. If not, it’ll take a very long time for a large site, and it’s probably not that useful either. Set the depth of link spidering and total pages. There are 3 simple steps to the meta extraction process:Įnter the URL you want to spider. ( Not surprising, it’s highly “un-optimized”) Or, you can just buy a complete package, done for you – like in the example below. oDesk and are options you can look into. You could write a web interface or a desktop interface to spider pages on your website, or hire it out completely. It doesn’t matter if it’s C++, Visual Basic, PHP, Python/Perl, many controls are available. And, it’s even easier if you license (buy) existing source code with modules or code classes you integrate into your programming project. I wrote a simple one in Visual Basic a while back. If you are a programmer, you can write a web spider from scratch. Here is one simple way to get the meta information for each page from your website. How can you quickly view the SEO meta tag information for each page? You need a web spider. (Architecture of your website is for another post!). Especially if you have a large website with hundreds of pages.

However, if you have a site with many pages (I hope so!), and you’d like to quickly see if the SEO ‘best practice’ of having meta tags in place, what they are, and what to change – it becomes a lot of work. You’ll see actual code, external references, naming conventions, image use, meta tag use and much more. It is a quick view into your code foundation and layout. Just use the “View Source” or “Page Source” options, and you’ll see the code and structure. Summaryīy using these free tools and following these easy steps you’ve learned how extract a list of website pages, the page addresses (URLs) and the meta titles and descriptions.When looking at a website for on-page content structure in terms of HTML/code, you can easily find out by going to the page and looking it up within your browser. I love the SEOPress plugin as its a lot less aggressive with adverts and upsells than the most common WordPress SEO plugin.

This can then be opened up in Numbers/Excel/Google Sheets so you can work out the redirects, and also copy the metadata out to paste into the SEO data plugin of the new site. Let that scan all your pages and click ‘download CSV’ under the list, and you now have a list of all the website’s pages, with their URLs, meta titles and meta descriptions.

Go to and paste the list of URLs into the box on the left. Open the urllist.txt file up (Should automatically open in Notepad on Windows and Textedit on Mac) and copy all the URLs. Unzip these files to a folder and you’ll then see your sitemap in a variety of formats. Once it’s scanned all your pages, scroll down a bit and download the zip file containing all your sitemaps. Go to, paste your website address into the bar and click start. Luckily there are two free tools that make this quick and easy. This can be a time consuming task to do by hand! This can then be used to ‘310 redirect’ old pages to their new versions to help keep search engine rankings and avoid ‘page not found’ errors. When building a new version of a website, we often need to extract the current pages, the page addresses (URLs) and the meta title and description.
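If you would rather script the extraction than use a ready-made tool, the sketch below shows one possible shape for a small spider that collects each page’s URL, meta title and meta description. It is a minimal illustration using only the Python standard library, not the tool shown in this post. The names `MetaParser`, `fetch_page`, `spider` and `report`, and the default limits, are assumptions made up for this example, and it ignores robots.txt, redirects and JavaScript-rendered pages.

```python
from collections import Counter, deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class MetaParser(HTMLParser):
    """Collects the <title>, the meta description and the links of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.links = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch_page(url):
    """Download one page as text (hypothetical helper, no retries)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def spider(start_url, max_depth=2, max_pages=50, fetch=fetch_page):
    """Breadth-first crawl of one site; returns (url, title, description) rows."""
    site = urlparse(start_url).netloc
    queue = deque([(start_url, 0)])
    seen = {start_url}
    rows = []
    while queue and len(rows) < max_pages:
        url, depth = queue.popleft()
        try:
            html = fetch(url)
        except OSError:
            continue  # skip pages that fail to load
        parser = MetaParser()
        parser.feed(html)
        rows.append((url, parser.title.strip(), parser.description))
        if depth >= max_depth:
            continue  # depth limit reached: do not follow this page's links
        for href in parser.links:
            link = urldefrag(urljoin(url, href)).url  # absolute URL, no #fragment
            if urlparse(link).netloc == site and link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return rows


def report(rows):
    """Return (titles used on more than one page, URLs with no description)."""
    counts = Counter(title for _, title, _ in rows if title)
    duplicates = sorted(title for title, n in counts.items() if n > 1)
    missing = [url for url, _, description in rows if not description]
    return duplicates, missing
```

Calling `spider("https://www.example.com/", max_depth=2, max_pages=100)` with your own address returns rows that can be written out with Python’s `csv` module and opened in a spreadsheet. `report(rows)` then lists duplicated titles and pages with no description. The depth and page limits are there for the reason given above: without them, a large site takes a very long time to crawl.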
